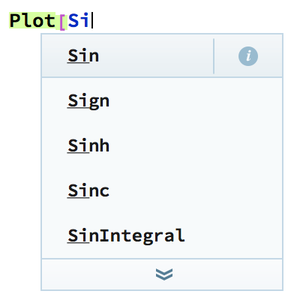

Autocomplete Programs

Neural networks can be trained to generate text. This example shows how to use a pre-trained character-level language model to autocomplete C programs.

Load a language model trained on C source code from the Wolfram Neural Net Repository.
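The repository model cannot be loaded outside the Wolfram Language, so the Python sketch below uses a hypothetical stand-in, `BigramCharModel`. It exposes the one operation the rest of the example relies on: given the text so far, return a probability for every possible next character. This toy model looks only at the previous character and uses add-one smoothing. It is an assumption made for illustration and is far weaker than the neural network it replaces.

```python
from collections import Counter, defaultdict
from typing import Dict


class BigramCharModel:
    """Hypothetical stand-in for a pre-trained C language model.

    Predicts the next character from the previous character only,
    using bigram counts collected from a training string.
    """

    def __init__(self, corpus: str) -> None:
        # counts[prev][nxt] = how often `nxt` follows `prev` in the corpus
        self.counts: Dict[str, Counter] = defaultdict(Counter)
        for prev, nxt in zip(corpus, corpus[1:]):
            self.counts[prev][nxt] += 1
        self.vocab = sorted(set(corpus))

    def next_char_probs(self, prefix: str) -> Dict[str, float]:
        """Return P(next character | prefix) for every vocabulary character."""
        last = prefix[-1] if prefix else " "
        following = self.counts[last]
        # Add-one smoothing: every character keeps a nonzero probability.
        total = sum(following.values()) + len(self.vocab)
        return {ch: (following[ch] + 1) / total for ch in self.vocab}


model = BigramCharModel("int main(void) { int i = 0; return i; }\n")
probs = model.next_char_probs("i")
# Show the three most probable characters after "i".
print(sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:3])
```

Any object with an equivalent `next_char_probs` method, including a wrapper around a real neural model, could be substituted in the steps that follow.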

Write a function that extends each candidate sequence with its most probable next characters and updates the sequence's probability accordingly, multiplying it by the probability of each appended character.
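A minimal Python sketch of such an update function follows, assuming each candidate is stored as a `(text, log_probability)` pair. Working in log space turns the product of character probabilities into a sum, which avoids numerical underflow on long sequences. The names `extend_beams` and `toy_probs` are hypothetical, and `toy_probs` is a fixed, context-free distribution standing in for the language model.

```python
import math
from typing import Callable, Dict, List, Tuple

Beam = Tuple[str, float]  # (text so far, log-probability of that text)


def extend_beams(beams: List[Beam],
                 next_char_probs: Callable[[str], Dict[str, float]],
                 k: int) -> List[Beam]:
    """Extend every sequence with its k most probable next characters.

    By the chain rule, the new log-probability is the old one plus the
    log-probability of the appended character.
    """
    extended: List[Beam] = []
    for text, logp in beams:
        probs = next_char_probs(text)
        top = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:k]
        for ch, p in top:
            extended.append((text + ch, logp + math.log(p)))
    return extended


def toy_probs(text: str) -> Dict[str, float]:
    # Hypothetical distribution that ignores the context entirely.
    return {"t": 0.5, "n": 0.3, " ": 0.2}


print(extend_beams([("i", 0.0)], toy_probs, k=2))
```

With `k=2`, the single input sequence `"i"` becomes two candidates, `"it"` and `"in"`, whose log-probabilities are `log 0.5` and `log 0.3`.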

Use this update function to implement a beam search: repeatedly extend the candidate sequences, keep only the most probable ones, and stop each sequence when it reaches the next delimiter (the space character).
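The following Python sketch shows one way to write such a beam search. At each step it expands every unfinished candidate by all possible next characters, keeps the `beam_width` most probable candidates overall, and moves any candidate that ends in the delimiter to the finished list. The function `beam_search_completions`, its parameters, and the toy vocabulary `TOY_WORDS` are hypothetical. `toy_next_char_probs` is an assumed stand-in model whose per-word probabilities are known, so the output can be checked by hand.

```python
import heapq
import math
from typing import Callable, Dict, List, Tuple


def beam_search_completions(prefix: str,
                            next_char_probs: Callable[[str], Dict[str, float]],
                            beam_width: int = 10,
                            max_steps: int = 20,
                            delimiter: str = " ") -> List[Tuple[str, float]]:
    """Return up to beam_width (completion, probability) pairs, best first."""
    beams = [("", 0.0)]  # (completion so far, log-probability)
    finished: List[Tuple[str, float]] = []
    for _ in range(max_steps):
        candidates = []
        for completion, logp in beams:
            for ch, p in next_char_probs(prefix + completion).items():
                if p > 0:  # log(0) is undefined; skip impossible characters
                    candidates.append((completion + ch, logp + math.log(p)))
        # Prune: keep only the most probable candidates.
        best = heapq.nlargest(beam_width, candidates, key=lambda c: c[1])
        beams = []
        for completion, logp in best:
            if completion.endswith(delimiter):
                finished.append((completion[:-len(delimiter)], logp))
            else:
                beams.append((completion, logp))
        if not beams:
            break
    finished.sort(key=lambda c: c[1], reverse=True)
    return [(c, math.exp(lp)) for c, lp in finished[:beam_width]]


# Hypothetical toy model: the next word is one of these, with these probabilities.
TOY_WORDS = {"int ": 0.5, "if ": 0.3, "include ": 0.2}


def toy_next_char_probs(text: str) -> Dict[str, float]:
    word = text.split(" ")[-1]  # the partial word being completed
    matches = {w: p for w, p in TOY_WORDS.items()
               if w.startswith(word) and len(w) > len(word)}
    if not matches:
        return {" ": 1.0}
    total = sum(matches.values())
    probs: Dict[str, float] = {}
    for w, p in matches.items():
        ch = w[len(word)]
        probs[ch] = probs.get(ch, 0.0) + p / total
    return probs


print(beam_search_completions("i", toy_next_char_probs))
```

For the prefix `"i"`, this toy setup yields the completions `"nt"`, `"f"`, and `"nclude"` with probabilities of about 0.5, 0.3, and 0.2. Calling the function with `beam_width=10` returns at most ten suggestions, matching the list size used in the next step. In this sketch, finished candidates compete for the same `beam_width` slots as unfinished ones. Other variants keep a separate budget for each.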

Generate a list of the 10 best completion suggestions.

Display the suggestions in a grid, together with their probabilities according to the language model.
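In plain Python, a comparable grid can be sketched as a two-column text table. The helper `format_grid` below is hypothetical. It assumes the suggestions arrive as `(completion, probability)` pairs, for example from a beam search like the one described above. The values in the usage line are illustrative inputs, not output from the repository model.

```python
from typing import List, Tuple


def format_grid(prefix: str, suggestions: List[Tuple[str, float]]) -> str:
    """Render (completion, probability) pairs as an aligned text grid,
    most probable first, showing the prefix joined to each completion."""
    ranked = sorted(suggestions, key=lambda s: s[1], reverse=True)
    rows = [(prefix + completion, f"{prob:.3f}") for completion, prob in ranked]
    width = max([len("Suggestion")] + [len(text) for text, _ in rows])
    lines = [f"{'Suggestion':<{width}}  Probability", "-" * (width + 13)]
    lines += [f"{text:<{width}}  {prob}" for text, prob in rows]
    return "\n".join(lines)


# Illustrative values only.
print(format_grid("i", [("f", 0.3), ("nt", 0.5), ("nclude", 0.2)]))
```

Each row shows the full word rather than only the appended characters, which makes the suggestions easier to read at a glance.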